Why enterprises need AI-native governance across data, models, and infrastructure before risk becomes systemic exposure.

The rapid transition of artificial intelligence from experimental labs to production environments has outpaced the development of standard security protocols. For the modern enterprise, establishing a resilient and secure MLOps workflow is no longer a technical preference; it is a governance requirement. While DevOps revolutionized software delivery through automation, the introduction of machine learning adds a layer of complexity that traditional pipelines are not equipped to handle without a dedicated AI safety framework.

In the absence of a structured framework, teams often rely on fragmented processes that favor speed over safety. This creates an environment where unvetted models, poisoned data, or unauthorized configurations can be deployed to production without detection. For the CISO and Chief Risk Officer, the challenge is ensuring that the “black box” of AI does not become a source of systemic exposure due to poor AI risk management.

The core issue is that many organizations treat AI as standard software. In reality, the non-deterministic nature of models means that security must be embedded in the model’s behavior and its lineage, not just in the code that wraps it. Failure to secure this MLOps workflow represents a strategic blind spot that exposes the enterprise to unmanageable risk.

The Invisible Complexity of the AI Attack Surface

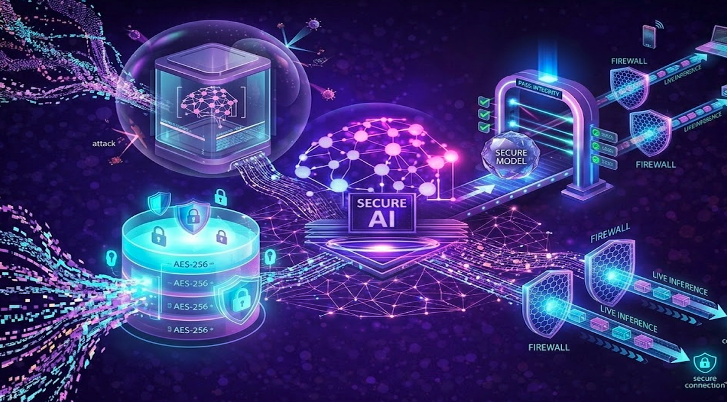

A secure MLOps workflow must cover a significantly broader surface area than traditional CI/CD. In standard software, security focuses on code vulnerabilities and access. In AI, the risk is distributed across three distinct planes: the data, the model, and the infrastructure.

Traditional approaches often stop at the container level. However, in the AI era, adversarial robustness must be validated at every stage. If an adversary compromises a training set or a model registry, the resulting “secure” container will simply serve a malicious model. A comprehensive MLOps strategy ensures that the integrity of the model weights is protected as rigorously as the code itself.

| Dimension | Traditional Approach | AI-Era Reality |
|---|---|---|
| Attack Surface | Fixed perimeters and known ports. | Dynamic, distributed, and model-specific. |
| Trust Model | Binary (Authorized vs. Unauthorized). | Probabilistic and context-dependent. |
| Integrity Check | Static Analysis (SAST/DAST). | Adversarial Robustness & Bias Testing. |
| Provenance | Version control (Git). | Model lineage of data, code, and weights. |

Silent Failures and Logic Drift: How AI Compromise Actually Spreads

In an insecure MLOps environment, risk often manifests through silent failures during the promotion phase. Without a hardened workflow, a model may appear to perform well on test data while lacking adversarial robustness, leaving it vulnerable to prompt injection or evasion attacks that only appear once it interacts with live traffic.

Compromise often spreads through shadow deployments, where data scientists bypass security gates to meet deadlines. This leads to a breakdown in model lineage, making it impossible to identify which model version is running or what data it was trained on. When an incident occurs, the lack of a secure MLOps workflow renders root cause analysis futile.
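To make the idea of model lineage concrete, the following is a minimal sketch of a lineage record, assuming a team chooses to tie each deployed version to a digest of its training data, the commit of its training code, and a digest of its weights. The `LineageRecord` class, its field names, and the example values are hypothetical illustrations, not part of any specific registry or product.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LineageRecord:
    """Hypothetical record tying a deployed model to what produced it."""
    model_name: str
    model_version: str
    dataset_sha256: str   # digest of the training data snapshot
    code_commit: str      # version-control commit of the training code
    weights_sha256: str   # digest of the serialized model weights

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys, fixed separators) so that the same
        # record always produces the same fingerprint.
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def sha256_bytes(data: bytes) -> str:
    """Hex SHA-256 digest of an in-memory byte string."""
    return hashlib.sha256(data).hexdigest()


# Illustrative values only; real digests would come from real artifacts.
record = LineageRecord(
    model_name="fraud-scorer",
    model_version="1.4.0",
    dataset_sha256=sha256_bytes(b"example training snapshot"),
    code_commit="0123abc",
    weights_sha256=sha256_bytes(b"example weights"),
)
print(record.fingerprint())
```

If the fingerprint is logged alongside every deployment, a shadow deployment shows up as a running model with no matching record, which is one way to keep root cause analysis possible.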

Systemic exposure also occurs through model drift. A model that was safe at deployment may become unsafe as real-world data evolves. Without automated feedback loops integrated into your MLOps pipeline, this degradation happens in the dark, impacting customer trust without ever triggering a traditional “system down” alert.
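One common way to build such a feedback loop is to compare the distribution of a feature in live traffic against the distribution seen at training time. The sketch below uses the Population Stability Index (PSI) as an example metric; the article does not prescribe a specific technique, so the choice of PSI, the equal-width binning, and the function name are assumptions made for illustration.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample and a live sample of one numeric feature."""
    if len(baseline) == 0 or len(live) == 0:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    # Equal-width bins over the baseline range; fall back to 1.0 when the
    # baseline is constant so the width is never zero.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each proportion at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(live)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
print(population_stability_index(baseline, baseline))
print(population_stability_index(baseline, shifted))
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the threshold that should raise an alert depends on the feature and the business context and would need tuning.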

The Compliance Gap: Why Legacy Security Is Blind to Probabilistic Risk

Current security and compliance frameworks are largely built for static logic. They excel at identifying a vulnerable library but are blind to the logic vulnerabilities that AI risk management is designed to catch. Legacy tools are designed for systems that are programmed, not for systems that are trained.

Traditional controls fall short because they do not account for the black box nature of machine learning. They lack the capability to inspect the internal alignment of a model or to verify that the model lineage remains untampered from staging to production. Most organizations are failing to ask: “How do we prove the model hasn’t been tampered with between the training server and the production API?”
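One possible answer to that question is to sign a digest of the model artifact where it is trained and re-verify it before it is served. The sketch below uses only the Python standard library (`hashlib` and `hmac`); the function names, the shared-key approach, and the demo key are illustrative assumptions. In practice the key would live in a secrets manager, and many teams would prefer asymmetric signatures.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so large weight files are never fully loaded into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def sign_digest(digest_hex: str, key: bytes) -> str:
    """HMAC-SHA256 over the artifact digest, produced on the training side."""
    return hmac.new(key, digest_hex.encode("ascii"), hashlib.sha256).hexdigest()


def verify_artifact(path: Path, signature: str, key: bytes) -> bool:
    """Recompute the signature at deploy time and compare in constant time."""
    expected = sign_digest(file_sha256(path), key)
    return hmac.compare_digest(expected, signature)


with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / "model.bin"
    weights.write_bytes(b"example weights")
    key = b"demo-key-not-for-production"  # hypothetical key for the demo only
    signature = sign_digest(file_sha256(weights), key)
    print(verify_artifact(weights, signature, key))  # artifact untouched
    weights.write_bytes(b"example weights, modified")
    print(verify_artifact(weights, signature, key))  # artifact changed after signing
```

A check like this only proves the file is the one that was signed. It says nothing about whether the training data or the training process were trustworthy in the first place, which is why it complements lineage rather than replacing it.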

The blind spot lies in the middle of the lifecycle, the space between the code and the output. Relying on legacy tools to govern MLOps is like using a lock to protect a conversation; it may secure the room, but it does nothing to ensure the integrity of the message.

The High Cost of Unmanaged AI

For the board of directors, the implications of unmanaged AI risk are not theoretical. Regulatory bodies are increasingly focusing on AI transparency, requiring enterprises to explain how their models reach specific decisions. Without a secure, documented MLOps workflow, providing this transparency is operationally impossible.

Customer trust is the most fragile asset in the AI economy. A single public failure, whether a biased model or a leaked dataset, can erase years of brand equity. Furthermore, operational continuity is at stake. If an enterprise becomes overly dependent on an AI system that lacks an AI safety framework, the inability to execute a rapid, secure rollback can lead to prolonged service outages.

The Four Pillars of a Hardened AI Lifecycle

Operationalizing governance requires moving away from manual intervention toward automated, verifiable gates within your MLOps pipeline. Effective governance is not about slowing down the process. It is about building a faster, safer lane for innovation.

A mature MLOps strategy requires:

- Visibility: A comprehensive inventory of all AI systems and their model lineage.

- Continuous Evaluation: Testing for adversarial robustness as a standard promotion gate.

- Operationalized Approvals: Hardened gates that prevent a model from being promoted unless it meets the requirements of an AI safety framework.

- Traceability: Detailed documentation that connects model outputs back to specific datasets and training runs.
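The four pillars above can be sketched as a single automated promotion gate. This is a minimal illustration under stated assumptions: the `PromotionCandidate` fields, the 0.9 robustness threshold, and the `security` and `risk` approver roles are all hypothetical placeholders that an organization would replace with its own policy.

```python
from dataclasses import dataclass, field
from typing import List, Sequence


@dataclass
class PromotionCandidate:
    """Hypothetical summary of a model awaiting promotion to production."""
    model_name: str
    in_inventory: bool                 # visibility: registered in the AI inventory
    lineage_fingerprint: str           # visibility: lineage record identifier
    robustness_score: float            # continuous evaluation: 0.0 to 1.0
    approvals: List[str] = field(default_factory=list)
    training_run_id: str = ""          # traceability
    dataset_ids: List[str] = field(default_factory=list)  # traceability


def gate_failures(
    candidate: PromotionCandidate,
    min_robustness: float = 0.9,
    required_approvers: Sequence[str] = ("security", "risk"),
) -> List[str]:
    """Return the reasons promotion is blocked; an empty list means it may proceed."""
    failures: List[str] = []
    if not candidate.in_inventory or not candidate.lineage_fingerprint:
        failures.append("visibility: model is not inventoried with a lineage record")
    if candidate.robustness_score < min_robustness:
        failures.append("continuous evaluation: robustness score below threshold")
    missing = [role for role in required_approvers if role not in candidate.approvals]
    if missing:
        failures.append("approvals: missing sign-off from " + ", ".join(missing))
    if not candidate.training_run_id or not candidate.dataset_ids:
        failures.append("traceability: training run or datasets not recorded")
    return failures


candidate = PromotionCandidate(
    model_name="fraud-scorer",
    in_inventory=True,
    lineage_fingerprint="abc123",
    robustness_score=0.82,
    approvals=["security"],
    training_run_id="run-42",
    dataset_ids=["transactions-2024q1"],
)
for reason in gate_failures(candidate):
    print(reason)
```

Returning every failed check, rather than stopping at the first, gives the team a complete list to fix in one pass, which supports the goal of a faster lane rather than a slower one.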

Unified Visibility and Continuous Oversight With Cranium

Cranium provides the foundational platform for this new era of AI-native oversight. By unifying the visibility and security of AI systems, Cranium enables enterprises to move from exposure to resilience.

Through Cranium Arena, organizations can conduct model evaluation and adversarial red-teaming as a standard part of the promotion cycle. Detect AI continuously monitors the environment, identifying anomalies in model behavior that traditional security tools miss. Finally, Cranium’s automated documentation and AI Cards provide the traceability required for regulatory compliance and internal accountability.

We provide the grounding for AI, ensuring that every model in the enterprise is as secure as it is aligned with business objectives.

Bottom Line

A resilient and secure workflow is the only way to scale AI without introducing unacceptable enterprise risk. Relying on ad hoc processes and legacy security tools creates an environment where failure is not a matter of if, but when.

The governance gap in AI is a structural problem that requires an architectural solution. To protect the integrity of the business, security and MLOps must be fused into a single, continuous discipline.

True resilience comes from having the visibility to see a threat, the documentation to prove compliance, and the controls to act before a risk becomes a crisis.

Explore how Cranium helps enterprises govern and secure AI systems: cranium.ai