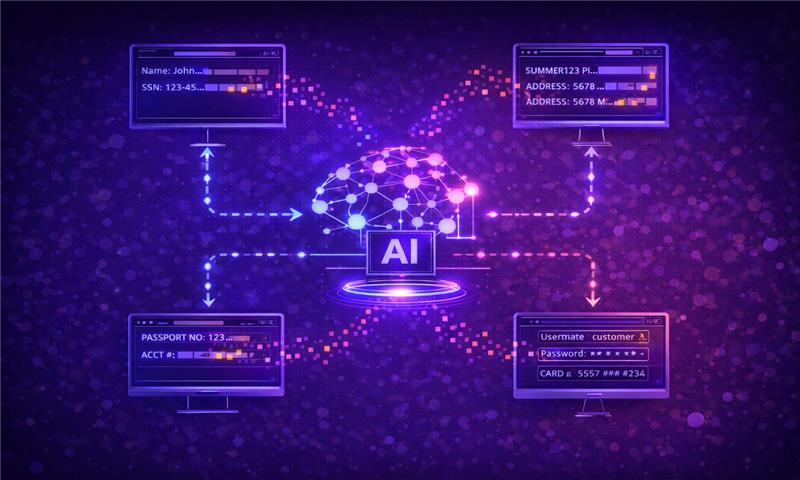

Because modern AI isn’t built in one place, it’s assembled from everywhere.

AI systems don’t arrive fully formed. They are assembled from data sources, pretrained models, open-source libraries, orchestration frameworks, third-party APIs, and cloud services. That modularity is what makes AI scalable and innovative. It’s also what makes it fragile.

When one component is compromised, influenced, or poorly governed, the impact rarely stays contained. It quietly travels downstream, into decisions, outputs, and customer-facing systems, often without triggering traditional security alerts. That’s the core risk of AI supply chain compromise: the vulnerability is distributed, but the accountability is not.

The AI Supply Chain Is Bigger Than Most Teams Realize

In traditional software, supply chain risk typically centers on code dependencies and build pipelines. AI expands that surface dramatically.

The AI supply chain includes training datasets, pretrained foundation models, fine-tuned derivatives, model weights, open-source ML frameworks, vector databases, inference endpoints, plugins, orchestration tools, and the downstream applications that consume model outputs. In many cases, it also includes services your AI calls dynamically during runtime.

Each layer introduces dependencies. Each dependency introduces trust.

Unlike software packages, AI components are not always transparent. You may know which model you’re using, but not what data shaped its behavior. You may trust a vendor’s API, but not have visibility into how it evolves or what it relies on internally. The chain extends beyond what the documentation suggests.

How Compromise Spreads Quietly

An AI supply chain compromise rarely announces itself with a system outage. More often, it influences behavior subtly.

A poisoned dataset can skew outputs without reducing model accuracy metrics. A compromised pretrained model can embed unsafe behaviors that activate only under certain prompts. A third-party AI API can alter behavior or introduce vulnerabilities without a formal deployment in your environment.

From the outside, the system continues functioning. From a governance perspective, it may already be operating outside intended boundaries.

This is what makes AI supply chain risk uniquely dangerous: inherited weaknesses travel silently across systems and decisions. The damage accumulates gradually and is often discovered only after customer impact, compliance exposure, or reputational harm.

Open-Source Acceleration, Governance Lag

Open-source is foundational to AI development. Foundation models, datasets, and tooling evolve rapidly in public ecosystems. That speed drives innovation, but it also widens the attack surface.

Security research has already demonstrated malicious model repositories, poisoned ML packages, and compromised training artifacts distributed through trusted channels. In 2023 and 2024, researchers identified multiple instances of machine learning models on public repositories containing hidden backdoors or embedded malicious logic.

Traditional open-source security practices focus on scanning for known vulnerabilities in code. They don’t assess how a model was trained, whether its weights were tampered with, or how it behaves under adversarial conditions.

When organizations integrate these components without deeper validation, they inherit risks they cannot easily see.

Third-Party AI Services Create Invisible Dependencies

Modern AI systems increasingly rely on external APIs for inference, enrichment, or orchestration. These services are powerful and outside your direct control.

If a vendor updates a model, modifies guardrails, or experiences a breach, your systems may immediately inherit that change. Unlike software deployments, these updates can occur dynamically, without your team pushing a new version.

That creates a new category of supply chain dependency: real-time behavioral drift driven by external systems.

Traditional monitoring tools may confirm uptime and API availability. They cannot verify that outputs remain safe, compliant, or aligned with policy.

Why Traditional Security Falls Short

Software supply chain security has matured. SBOMs track dependencies. Code signing verifies integrity. CI/CD pipelines enforce checks. Those controls still matter. They’re just incomplete for AI.

AI risk does not reside only in code. It lives in training data, model weights, inference behavior, and dynamic interactions. A signed model artifact does not prove that the training data is clean. A verified dependency list does not reveal adversarial susceptibility. A secure build pipeline does not protect against compromised downstream APIs.

Traditional security answers the question, “Was this code altered?” AI governance must answer the question, “How does this system behave, and why?”

Without that behavioral and lineage visibility, organizations operate with partial assurance.

When Supply Chain Risk Becomes Enterprise Risk

For CISOs and security leaders, AI supply chain compromise is not theoretical. It becomes real the moment AI influences customer outcomes, financial decisions, regulatory reporting, or operational workflows.

Regulators increasingly expect transparency into how AI systems are built and governed. The EU AI Act, for example, emphasizes documentation, traceability, and risk management across the lifecycle. If a compromised component introduces bias or unsafe behavior, responsibility does not stop at the vendor boundary.

Customers rarely care which upstream dependency caused harm. They care that it happened.

That means AI supply chain governance is no longer optional. It’s a prerequisite for deploying AI responsibly at scale.

What Securing the AI Supply Chain Actually Requires

Effective AI supply chain security starts with visibility. Organizations must understand which models exist, where they originated, how they were trained, and which services they depend on.

But visibility alone is not enough. Components must be evaluated behaviorally. Models should be tested against adversarial scenarios and misuse cases before deployment. Updates must be validated before trust is extended. Runtime behavior should be monitored continuously, not assumed stable.

Governance must be operational, not theoretical. Documentation, traceability, and accountability must be embedded into workflows, not assembled retroactively during audits.

This is where AI-native oversight becomes critical.

Where Cranium Fits

Cranium helps enterprises bring structure to AI supply chain governance without slowing innovation.

By providing visibility into AI systems and their dependencies, organizations can understand where components originate and how they connect. Through testing capabilities such as Cranium Arena, teams can evaluate models before they propagate risk downstream. With continuous oversight from Detect AI, organizations gain behavioral monitoring that traditional tools cannot provide. And with AI Cards, enterprises can maintain auditable documentation of model lineage, testing, and governance decisions.

The goal isn’t to eliminate dependency. It’s to make dependency defensible.

Bottom Line

The AI supply chain is easy to compromise because it is complex, distributed, and constantly evolving.

Every dataset, pretrained model, open-source component, and third-party API adds value. Each also extends your trust boundary. When compromise occurs, it doesn’t always break systems—it quietly reshapes them.

Organizations that treat AI components as black boxes will struggle to manage that risk. Those who invest in visibility, testing, and governance will be positioned to scale AI responsibly.

AI doesn’t just introduce a new attack surface. It introduces a new supply chain. Understanding it is the first step. Securing it is the next step.

Explore how Cranium helps enterprises govern and secure AI systems across the supply chain:

https://cranium.ai